By John B. Bennison ’74

When I arrived at Amherst in the fall of 1970, my first priority, after locating my dorm and the dining hall, was to stake out the route to my campus mailbox—a connection to the world outside. I found the mailroom in the basement of Converse Hall, a former library that was, by then, an administrative building.

For much of my freshman year I checked my mail with no inkling that just around a corner was a classroom-sized space that would become the epicenter of my Amherst experience. I had no idea that lifelong friendships and the foundation of my professional life were waiting there. There was a lot that we freshmen didn’t know.

It wasn’t just that we were ignorant; times were different. Life in 1970 was low-tech. Digital watches wouldn’t be produced for two more years. I came to college with a slide rule, a chess set and some drafting tools. The two devices that define today’s material culture were utterly absent: no one—student or professor—had anything like a personal computer or wireless phone.

I need to digress about phones. My class arrived at college a year before the first email would be sent over the ARPANET and a month after Richard Nixon signed an act to replace the Post Office Department with an independent U.S. Postal Service. The telephone was in its heyday.

Before Amherst, I’d not had my own phone. That a freshman dorm room would include a telephone seemed an improbable luxury, but each room in Stearns Hall had one. This was long before the breakup of AT&T, and phones did not have modular jacks. Instead, a standard-issue black desk phone was permanently wired into the wall. And its rental charge was just as permanently embedded in my phone bill.

To my roommate’s dismay, I took our room phone apart and reassembled it; I casually stress-tested the plastic components. That rented phone was built to last. At a time when hippies disparaged the materialist consumerism embodied in “planned obsolescence,” there was begrudging respect for the durable equipment of the monopoly behind Western Electric, Bell Labs and AT&T.

But that respect went only so far. Then new, the Merrill Science Center had a science library, and behind an open counter was a librarian’s desk with a telephone. The phone had a rotary dial with a keyed lock in the first finger hole, to keep students from making calls when the librarian wasn’t there.

But science students are curious and social; we quickly discovered that you could generate the signal pulses of a rotary phone by rapidly depressing and releasing the switch hook. A few dozen properly spaced taps would connect to a four-digit campus extension. Nine taps in less than a second would get an outside line.
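The trick works because a rotary dial signals each digit as a burst of brief line interruptions, which is just what a quick tap on the switch hook produces. Here is a minimal sketch in Python, assuming the usual convention of one pulse per unit of the digit and ten pulses for zero. The function names and the sample extension are made up for illustration.

```python
def pulses_for_digit(digit: int) -> int:
    """Number of hook taps that signal one dialed digit (0 is sent as ten)."""
    if not 0 <= digit <= 9:
        raise ValueError("a rotary dial only has the digits 0 through 9")
    return 10 if digit == 0 else digit


def taps_for_number(number: str) -> list[int]:
    """Tap counts per digit; the caller pauses briefly between bursts."""
    return [pulses_for_digit(int(ch)) for ch in number]


# A made-up four-digit extension, purely for illustration.
extension = "5487"
print(taps_for_number(extension))       # [5, 4, 8, 7]
print(sum(taps_for_number(extension)))  # 24 taps in all
print(taps_for_number("9"))             # [9], the outside line
```

The pause between bursts is what separates one digit from the next, which is why the taps had to be properly spaced.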

Meanwhile, across the continent, at a small elite liberal arts college on the other coast, Steve Wozniak was making “blue boxes,” and Steve Jobs was selling them. Those devices were for cadging free phone calls on a more ambitious scale. Our coterie of Amherst gentlemen limited our phone phreaking to overcoming a minor inconvenience and proving a point.

Back to computing. Besides an inadequately protected phone, Merrill also had some computers. One was a Wang 300SE scientific calculator for use by students. It was as big as a desk, but it could perform rapid scientific calculations including natural logarithms and trig functions. Amazingly, its computations were based on analog circuits. (Today, even a rice cooker uses digital, as opposed to analog, logic.)

In 1970 the Wang calculator was actually a big deal. Texas Instruments had developed the first handheld calculator three years earlier. But that TI machine could only add, subtract, multiply and divide. With the Wang calculator, evaluating the square root needed to compute the standard deviation for lab observations became trivial instead of tedious, and calculating the altitude and azimuth of a celestial object without massive printed tables became possible, although still daunting. (Then as now, raw calculation offered no help with tough assignments, such as analyzing physics Professor Bob Romer ’52’s dreaded Wheatstone Bridge out of equilibrium, a conundrum that still haunts engineering blogs on the Web today.)

Merrill also offered access to a real digital computer. In a windowless room a melamine desk sat between two devices that looked like Selectric typewriters—replete with inked ribbons and removable type balls with glyphs in high relief. Those terminals looked like typewriters because IBM 2741 computer terminals were modified typewriters. They connected to a timesharing computer at UMass through a then-state-of-the-art phone connection that was about 50,000 times slower than a cable Internet connection.

Researchers at Dartmouth had invented a programming language called BASIC in 1964 and pioneered the concept of “timesharing” on a mainframe computer. By 1970 Dartmouth had debuted a fifth generation of BASIC. Amherst lagged, hosting no timesharing service of its own. But members of the Five College Consortium could share time on UMass’s CDC 6600 computer. That UMass system hosted a limited version of BASIC and a scientific programming language called APL.

APL had started as a mathematical notation and was adapted to become an interactive programming language. It was never a dominant language but it remains an elegant fringe language—concise, powerful and deservedly notorious for being somewhat inscrutable. Unlike other languages, it reads right-to-left and uses a special character set with many Greek and mathematical symbols. It is so concise that it can, for example, exhaust the memory of any computer with but a seven-character entry from the keyboard.

My curiosity about APL is what pushed me deeper into the basement of Converse, venturing beyond the post office, past double fire doors into the hidden realm of Amherst College computing.

That basement Computer Center was an incongruous singularity from the very first impression. The central “machine room” had glass walls but no windows. It had a raised floor and a dropped ceiling. Its bright fluorescent lighting belied both darkness and daylight. And the over-conditioned air was maintained at steady temperature and humidity with a brittle constancy. It always smelled like no place else on earth (except, as I would discover later, every other machine room on earth).

On entering, one encountered a voluble background of keypunch chunking, terminal clattering and card-reader whirring punctuated by the splutter of an impact printer. Disk drives softly moaned as, inside each drive, one tiny “read/write head” buzzed like a dancing bee, crawling backwards and forwards as it paced off distance, before depositing or harvesting bits of data on the spinning sectors underneath. Sporadically, a pen plotter twitched and soared with the soft resonance of a slide whistle on downers. Even the thrum of the air handler changed pitch with air pressure as the door to the machine room opened and closed.

Strange to tell, I soon found the edge of that acoustic chaos to be one of the few places on campus where I could hear myself think.

The computer system that occupied that machine room was an IBM 1130 with a 1403 line printer, a 1442 card read/punch, a 1627 drum plotter (actually made by Calcomp) and a 2310 disk with a capacity of 512K words. (“Words” were the prevalent units of measure at the time.) Our removable disk held one megabyte of data in a cartridge about the size of a pizza box.

The 1130 system had 16K words of magnetic core memory. Memory was manufactured by stringing tiny doughnut-shaped magnetic beads, the “cores,” in a three-dimensional wiring harness. Someone, almost certainly a woman in Poughkeepsie, used a needle to string the beads by hand. The resulting 20th-century wampum was pricey—about 100,000 times what equivalent semiconductor memory would cost today. With traditional wampum of the Massachusett and Nauset peoples, a bead was said to represent an individual memory while a belt of strung beads recorded a significant event in history. So it was with the modern wampum: a precious belt of hand-strung beads recorded in memory the bits and bytes of our software exploits.

First released in 1965, the IBM 1130 was a modest machine, suitable for a small liberal arts college—perhaps the five-year-old Chevy Lumina of computing. Both Williams and Smith had 1130s. Ours was soon upgraded by doubling the main memory and adding a second disk drive. And in about 1972 ours got a game-changing enhancement: a monochrome Tektronix CRT for vector graphics.

It’s a challenge to even compare that system with today’s machines. Moore’s law (an enduring rule of thumb which states that computing power doubles about every two years) indicates that 40 years later a comparable device would be 1,048,576 times more powerful. That seems about right.
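For anyone who wants to check the figure, the arithmetic is short: 40 years at one doubling every two years is 20 doublings. A quick sketch in Python, using only the rule-of-thumb numbers quoted above (the variable names are mine):

```python
years = 40
doubling_period_years = 2

# One doubling per period gives 20 doublings over 40 years.
doublings = years // doubling_period_years
factor = 2 ** doublings

print(doublings)      # 20
print(f"{factor:,}")  # 1,048,576
```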

While the changes in hardware boggle the mind, the art of programming is not so different now as then. This morning I was developing an application for the new iPad, and this afternoon remembering the IBM 1130. Techniques learned in the basement of Converse Hall are valid a lifetime later. But in 1970, for most of us, these were utterly new ideas. For those who were susceptible, the experience of programming was a tantalizing puzzle, which could grow to become a profession, a passion and sometimes an obsession.

Prior to computers, aspiring to elicit complex actions from inanimate objects with nothing but words belonged in the fanciful realm of sorcery. In a way, computer code is a kind of sorcerer’s spell: on a line printer we triggered 132 tiny hammers to hit slugs of type on a whirling chain to spell out words across a moving page of paper. It did seem like magic.

In the shadow of the Robert Frost Library, we also glimpsed that code is poetry. Clarity, concision, elegance and power are valid metrics for C functions as for couplets. We sought, and occasionally found, the exhilarating fulfillment of le mot juste. And what could be more gratifying than being able to decompose an incomprehensible problem into a solution made entirely of familiar building blocks of self-evident code? As the written word extends memory, programming extends reason. Surely, this is the stuff of a liberal arts education.

Surely not. Amherst pointedly offered no computer science class for academic credit before I graduated in 1974 (with a bruised and battered B.A. in mathematics). A computer science department wouldn’t be formed until 1990. While we couldn’t get credit for learning to program, or for traversing the rich field of knowledge that has become computer science, more than a few of us did it anyway.

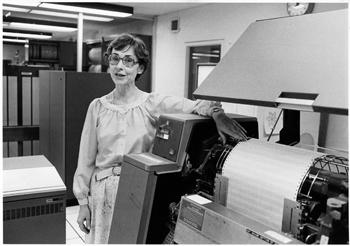

For me, it started with a few tutorials in APL. These were offered by the coordinator of the Computer Center, a sparkling woman rumored to have come from Bell Labs. She seemed more poised and slightly older than us students but younger and decidedly less formal than the faculty. She was a professional and very much a female, which at Amherst in 1970 was a rarity. She had a breezy demeanor that belied a dangerously sharp intellect. And her laugh lit the Converse basement.

I sat with Betty Steele while she patiently enumerated monadic and dyadic function operations, first on scalars, then on vectors and multidimensional arrays of data. She explained meta-operators (such as one that combined sequences of basic operations like addition and multiplication into a single choreographed step) and, finally, user-defined functions.

It took about three hour-long sessions to cover the basics of the APL language. Betty patiently introduced me to recursion, indexed arrays of higher dimensions and enough other concepts and terms to get me started with programming. More than Gödel’s Incompleteness Proof, more than the structure of a Beethoven sonata and more even than the poems of Philip Larkin, programming blew my mind. I was able to drink in effortlessness and perfect execution in an imperfect world, and it was intoxicating.

Between classes, I’d scurry back to Betty’s office, where she basically held an ongoing informal seminar all day long. It may not have been Paris between the Wars, but it was a time and place where something new and important was emerging, and a few quirky people were leading the discovery. It was intellectual without being academic. It was a scintillating effervescent mélange, a perfect “culture medium” for ideas.

Betty conducted occasional noncredit courses in APL and Fortran (a scientific programming language that accounted for much of the use of the 1130), but mostly she seemed to keep the center running smoothly by holding court. Petite and birdlike with a distinctive alert posture, she sat enthroned behind a desk in the small office across from the machine room. Her head would turn to see who was coming and going. She knew us all and had a welcoming smile for most. A constantly changing handful of student programmers encamped in her office between classes.

Some wandered off to bang out cards on the 029 keypunches (even those dinosaur devices were crudely programmable). A few worked solo at APL terminals. One or two at a time were hands-on at the 1130 console. (Sometimes their fingers undulated like pianists’ as they toggled binary sequences into memory through the 16 tiny lever-switches on the front console, if only to confirm their fluency in converting between binary and hexadecimal notation.) But everyone meandered in and out of Betty’s office at least to say hello and goodbye.
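The fluency being shown off is easy to describe: each hexadecimal digit stands for exactly four of the 16 switches. The Python sketch below assumes only that correspondence. The function name and the sample word are illustrative and say nothing about how any particular 1130 program was entered.

```python
def switches_for_word(hex_word: str) -> str:
    """Show a 16-bit word as four groups of four switch positions (1 = up)."""
    value = int(hex_word, 16)
    if not 0 <= value <= 0xFFFF:
        raise ValueError("the console holds exactly one 16-bit word")
    bits = format(value, "016b")
    # Group the bits in fours so each group lines up with one hex digit.
    return " ".join(bits[i:i + 4] for i in range(0, 16, 4))


print(switches_for_word("C0DE"))  # 1100 0000 1101 1110
print(switches_for_word("7FFF"))  # 0111 1111 1111 1111, which is 32,767
```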

For those of us perched on the floor or in chairs in her office, it was impossible to predict the path of conversations in advance and just as difficult to chart them afterwards. Somehow the more knowledgeable programmers revealed how things worked to the rest of us; we challenged and countered and generally struggled to advance the state of the art.

Eventually someone would lapse into a funny story or espouse a personal opinion, and sooner or later the conversation would devolve into arguing or whining about some unfortunate state of affairs in life. Betty would broker the exchange of ideas, laugh generously at the funny stories, referee the arguments and usually cull out the whining by informing the speaker that the discussion had become “booooring.” She did it with good humor, a sparkling wit and the crowning grace of a doyenne: the very best people naturally congregated around her.

And we had the best people. Several of the smartest students I got to know were regular fixtures at the Computer Center, and they were resident experts on the 1130 system. Bob Bruner ’73E was a serious mathematician before he had his bachelor’s degree. His predilection was LISP, still a premier tool for artificial-intelligence research; he was among a very few to ever run LISP on the venerable IBM 1130. Bruner was self-reliant, rolling his own cigarettes, proving his own theorems, building his own software tools. Maybe he’d have forsworn his rusty red ponytail if he’d found a way to cut his own hair, but probably not.

By the time I met him, Bruner had adroitly hacked the operating system of the 1130 to inject the words “Cogito Ergo Sum” at the end of every printed job. This was meant to be an innocuous and wry bit of showmanship, like capturing Sabrina without destroying any property. His hack worked remarkably well, until one weekend when the formidable Professor Romer was trying to load a program from another system and was thwarted by an unintended interaction with Bruner’s code. (Betty dutifully held Bruner to account.)

APL held, for me, the loyalty of a first love. But Tony Wingo ’73 dismissed it as “okay for an interpreted language.” For him, Assembler and especially compiled languages like C (which we didn’t have at Amherst) offered better access to the “bare metal” of the hardware, where the power and performance were.

Steve Goff ’73, a mercurial intellect who was smarter than everybody about everything, mischievously championed cleverness and creativity. Goff had taken a reference manual home after freshman year and memorized it that summer. He established himself as an expert in machine language. He used his knowledge to code a replacement macro assembler, a fundamental software tool that enabled programmers to write machine language instructions with the benefit of mnemonic opcodes, symbolic labels, comments, formatting and other essential conveniences. Goff’s labor of learning, for which he was not paid and received no academic credit, produced an assembler that had more features and ran many times faster than the commercial product IBM had provided with our system. We all used and preferred Goff’s assembler.

In the summer after his junior year he stayed at Amherst to work on his biophysics thesis. As he tells it, he “never got around” to renting a residence that summer, so he camped in his lab, in his office and sometimes on a sofa in the Computer Center.

Master chemist that he was, it must have been Goff who made it possible for someone else to sprinkle drops of a solution of ammonium nitrate around the machine room floor. Where it dried it left tiny crystals that exploded when you stepped on them. This was very amusing, except that Wingo was often barefoot.

Wingo was California trapped in Massachusetts: long hair, bare feet, wispy beard and a mellow attitude tinged with impatience for people he thought were foolish or ignorant. Most of us were that. Wingo valued earthy candor over social graces or propriety. He routinely propped his bare feet on the edge of Betty’s desk, and she routinely brushed them off.

Wingo had credibility; he had worked on a PDP-10 at the Brookings Institution. It gave him a breadth of experience; connections; and a multi-platform, multi-language perspective that most of us lacked.

It may have been Wingo, or maybe Goff, who had seen an article in Rolling Stone in 1972 about an early proof-of-concept for a CRT application at MIT. It was a two-person shoot-’em-up game called Spacewar!. When we got a CRT for the 1130, Bruner was hired to replace the system software that drove the display (the device arrived too buggy to use). With Bruner occupied, Goff and Wingo started building a version of Spacewar! for our system. I don’t know how far they got or how they divided up the labor, but as the brains of the Class of 1973 began to anticipate life after college, Douglas Weber ’74 stepped in to bring the project to completion.

Douglas was already an accomplished and prolific programmer. Unlike Bruner, Goff and Wingo, who could be addressed by first or last name around the center, Douglas was always “Douglas.” He wore shoes. He didn’t have long hair. His goatee was neatly trimmed. And he was often in a trench coat, carrying an umbrella, as if he had stepped out of a British spy movie. Like his predecessors, Douglas had learned the 1130 operating system inside and out. And without setting out to do so, Douglas is the one who blew open the doors of the Computer Center.

He labored on the Spacewar! project, perfecting the dynamic visual model of gravitation and Newtonian forces in a toroidal universe. He worked for weeks to get the orbital simulations to be fast enough for our limited processing power and to eliminate computational singularities (such as division by zero or intermediate calculations that exceeded 32,767). In early versions of the program, such singularities caused moving displays to freeze or suddenly reverse.
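Two of those ideas can be shown in a few lines. The number 32,767 is the largest value a signed 16-bit word can hold, and a toroidal universe is one where a ship leaving one edge of the screen re-enters at the opposite edge. The Python sketch below assumes two's-complement wraparound, which is one plausible way a velocity could suddenly flip sign. The function names and the 1,024-unit screen width are hypothetical, not details of Douglas's program.

```python
def to_int16(value: int) -> int:
    """Wrap an integer the way a signed 16-bit register would."""
    value &= 0xFFFF
    return value - 0x10000 if value >= 0x8000 else value


def wrap(coord: int, size: int) -> int:
    """Toroidal universe: leaving one edge re-enters at the opposite edge."""
    return coord % size


print(to_int16(32767))      # 32767, the largest value that fits
print(to_int16(32767 + 1))  # -32768, one step too far flips the sign
print(to_int16(200 * 200))  # -25536, a modest product already overflows
print(wrap(1030, 1024))     # 6, off the right edge and back on the left
print(wrap(-5, 1024))       # 1019, off the left edge and back on the right
```

Keeping every intermediate result inside that 16-bit range, without slowing the simulation, is the kind of work the weeks of tuning would have involved.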

When he finished, he had completed the hand-coded 1130 version of Spacewar!. It played about like tag on ice, but with jet packs. It had the essential features of Asteroids, a commercial arcade game released by Atari in 1979. In the world of computing, half a decade of lead-time was—and still is—a lifetime.

The game was ideal for two players. Spacecraft, shaped like the Greek letters delta and tau, orbited a central star and could shoot at each other or use thrusters to change angular and linear velocity. Douglas was good as a player but much better as a programmer.

I still remember one technical tour de force. Executing any program on the 1130 was generally neither simple nor self-evident. At a minimum, it involved running a deck of punched cards through the mechanical reader. And Douglas’ card deck grew to be as long as an arm; it had to be loaded in sections. The risk of getting cards out of order was high, and the consequences egregious.

With some ingenuity, one could store a pre-compiled image of a large card deck onto the disk and then use a much smaller card deck “loader” to bring the card image into memory for execution. But even the loader required several coordinated steps and a small but fragile card deck.

So Douglas found a characteristically clever solution that wove together several bits of deep knowledge about the system’s hardware and software. We all knew that pressing the console “reset” button preserved the contents of memory but cleared the Instruction Address Register. And many knew that memory location zero wasn’t utilized by the operating system. Douglas saw the opportunity. He put a machine instruction into location zero. It was hand-coded to branch to an unused chunk of memory where he had stashed a preconfigured call to a disk-loader function. That loader pulled in his program image from disk and transferred execution to it.

The effect was dazzling. If someone simply walked up to the console and pressed “reset” followed by “start,” the system would drop everything else and immediately begin running Spacewar! on the CRT. It suddenly put an engaging and free video game into the hands of about 1,243 college boys who had never had one before.

Humanities students who previously never knew there was a computer in Converse would come to play the game. Many times, strangers walked in and unwittingly reset the computer, abruptly wresting control from any executing program—sometimes invalidating hours of serious processing or reams of printing. The dedicated programmers could foresee the beginning of the end. We gave Betty a special program so she could disable the instant Spacewar! feature during business hours.

When Douglas graduated in 1974 he deservedly won the Dictionary, a prize awarded by Betty to the graduating student who most excelled. (There was no second prize, but I also received a dictionary, perhaps because Betty and I had become good friends. I still value the dear dictionary and treasure the friendship.)

Before Spacewar!, most of our classmates had no inkling of what was happening in the Computer Center. The few who knew were the few who lived it. And the alchemy of that moment in our microcosm mirrored the explosive emergence of computers in American culture. It is only in looking back that we can see the pivot point, both in our lives and in our culture. We were privileged to transform a technology, and in so doing, we became transformed ourselves.

Bruner and Goff remained brilliant academics. Bruner is a professor of mathematics at Wayne State University. He used his LISP in the 1980s to perform some notoriously difficult mathematical calculations, and his program evolved into one that is still used by a handful of algebraic topologists.

Goff is the Higgins Professor of Microbiology & Immunology and Biochemistry & Molecular Biophysics at Columbia. (Among his distinctions is an honorary degree from Amherst in 1996.) He spends his time unraveling genetic codes for HIV and leukemia as he once raveled assembly codes for the 1130. He cites the camaraderie of the Amherst Computer Center as the model of the working environment he fosters for his students in labs at Columbia, and he recalls his time in the Computer Center as his defining experience at Amherst.

Wingo and Douglas carved out professional careers in Silicon Valley. As a senior software engineer, Wingo left his mark on Apple networking, Google spam filtering and various system-level projects involving I/O drivers and other real-time code. He retained his proclivity for system-level programming in languages like C.

Douglas has been an IT consultant and a senior software architect, and he remains a senior contributor at a San Francisco Bay-area firm that builds products in a wide range of application areas. I don’t know if he maintained his expertise in video games or space simulators, but I smiled when I saw that the company he works for is called Rocket Software.

Almost four decades after graduating, each of us remembers the extraordinary freedom we enjoyed at the Computer Center and how we were coached and cajoled into being trustworthy stewards of an important community resource. Betty Steele (now Betty Romer; she and Professor Romer married in 1994) was at the heart of it all. In many ways she was the heart of it all. Some days she was an inadvertent den mother. At other times she was a cagey collaborator, rewarding productive creativity and turning a blind eye to some insanely great (albeit usually ill-fated) caper in the quest for the ultimate software hack. She unerringly afforded us the maximum creative freedom—any more and we would have collapsed into chaos. She earned, by degrees, our respect, appreciation, admiration and trust. For the core group at the center, those melded into a lifelong affection and loyalty.

Betty says that soon after 1974 everything changed. The introduction of word processors, the move to Seeley Mudd with a newer generation of computer, the formalization of computer science as an academic discipline and the changing role of technology in society made campus computing much more mainstream. Like her retirement from the Computer Center in 1996, it was a natural and inevitable fulfillment. But it also marked the end of our very special moment in time.

John B. Bennison is a retired software developer who has sent more telegrams than tweets. #EarlyAmherstComputing

This article is copyright John B. Bennison, 2012, and is printed with permission.

Illustration by Shaw Nielsen